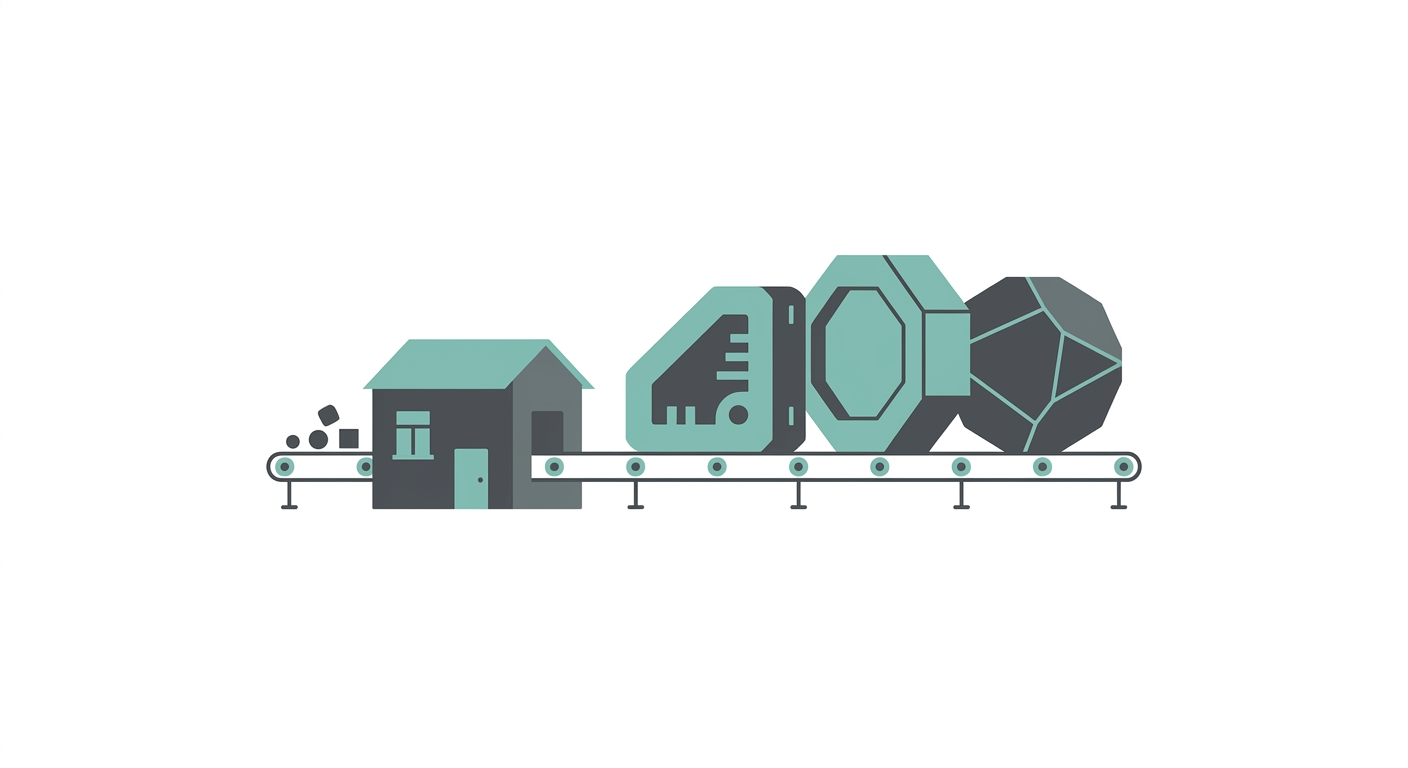

Blueprints for the 10-Person Unicorn

Venture Studio Model (based on operational frameworks used to deploy capital at Scalable Ventures)

What Does the New Math of AI-Native Valuation Look Like?

- Headcount: ~10 Full-Time Employees (FTEs)

- Revenue: $100M+ ARR

- Revenue/Employee: $10M+
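The revenue-per-employee figure follows directly from the two lines above it: ARR divided by full-time headcount. Here is a minimal Python sketch of that arithmetic. The function name `revenue_per_employee` is hypothetical.

```python
def revenue_per_employee(arr_usd: float, full_time_employees: int) -> float:
    """Return annual recurring revenue (ARR) divided by full-time headcount."""
    if full_time_employees <= 0:
        raise ValueError("headcount must be positive")
    return arr_usd / full_time_employees


# The blueprint's target: $100M ARR across ~10 FTEs.
target = revenue_per_employee(100_000_000, 10)
print(f"${target:,.0f} per employee")  # prints "$10,000,000 per employee"
```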

Who Gets a Seat at the 10-Person Table?

1. The CEO (Vision & Capital)

2. The CTO (System Architect)

3-8. The "Full-Stack Architects" (6 Seats)

- Prompt-engineer complex agentic workflows.

- Deploy infrastructure.

- Debug model hallucinations.

- Understand the business logic.

- They don't write boilerplate; they review AI-generated code.

9. The Product Lead (User Empathy)

10. The "Flow Engineer" (The New Ops)

What Roles Disappear Entirely?

- SDRs (Sales Development Representatives): Replaced by outbound AI agents that personalize email at near-unlimited scale.

- Customer Support: Replaced by RAG-based chatbots that solve 95% of queries instantly.

- Middle Management: With almost no one to manage, a dedicated management layer becomes unnecessary.
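The support claim rests on a simple routing pattern. The system retrieves the closest match from a knowledge base. It answers automatically when the match is strong and escalates to a human otherwise. The sketch below shows only the retrieval-and-escalation step of a RAG pipeline, and it rests on several assumptions. The knowledge base, the `route_ticket` function, and the 0.5 threshold are all hypothetical. Jaccard token overlap stands in for the embedding search a production system would use. The LLM generation step is omitted, so the stored answer is returned directly.

```python
import re

# Hypothetical knowledge base: canonical question -> approved answer.
KNOWLEDGE_BASE = {
    "how do i reset my password": "Use the 'Forgot password' link on the login page.",
    "how do i cancel my subscription": "Go to Settings > Billing and choose Cancel plan.",
    "where can i download my invoice": "Invoices are under Settings > Billing > History.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Token-overlap score in [0, 1]; a stand-in for embedding similarity."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def route_ticket(query: str, threshold: float = 0.5) -> tuple[str, str]:
    """Answer from the best-matching entry, or escalate if no match clears the threshold."""
    q = tokenize(query)
    best_question, best_score = max(
        ((question, jaccard(q, tokenize(question))) for question in KNOWLEDGE_BASE),
        key=lambda pair: pair[1],
    )
    if best_score >= threshold:
        return "answered", KNOWLEDGE_BASE[best_question]
    return "escalated", "Routing to a human agent."


print(route_ticket("How do I reset my password?"))
print(route_ticket("My account was charged twice"))
```

In a full RAG setup, the retrieved entry would be passed to a language model as context rather than returned verbatim. The share of tickets that clears the threshold determines how many queries resolve without a human.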

How Do You Replace Payroll with an Agentic Stack?

| Traditional Function | AI-Native Replacement | Cost Difference |
|---|---|---|
| Outbound Sales Team | Clay + OpenAI API | 10x Cheaper |
| Content Marketing | Perplexity + Custom LLM Pipelines | 20x Cheaper |
| L1/L2 Support | Intercom Fin / Custom RAG | 5x Cheaper |
| Data Analysis | Code Interpreter / Julius | 100x Cheaper |
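Read each "Cost Difference" entry as an "N times cheaper" factor. The savings for a function is then its traditional cost minus that cost divided by N. Here is a minimal Python sketch that takes the table's factors at face value. The helper names are hypothetical, and the budget figures are placeholders rather than data from this article.

```python
# Relative cost factors taken from the table ("10x Cheaper" -> 10, etc.).
COST_FACTORS = {
    "Outbound Sales Team": 10,
    "Content Marketing": 20,
    "L1/L2 Support": 5,
    "Data Analysis": 100,
}


def ai_native_cost(traditional_cost: float, factor: float) -> float:
    """Annual cost of the AI-native replacement, given an 'N times cheaper' factor."""
    if factor <= 0:
        raise ValueError("factor must be positive")
    return traditional_cost / factor


def annual_savings(traditional_costs: dict[str, float]) -> dict[str, float]:
    """Savings per function: traditional cost minus the AI-native cost."""
    return {
        fn: cost - ai_native_cost(cost, COST_FACTORS[fn])
        for fn, cost in traditional_costs.items()
    }


# Hypothetical placeholder budgets; substitute your own payroll figures.
budgets = {"Outbound Sales Team": 1_000_000, "L1/L2 Support": 500_000}
savings = annual_savings(budgets)
for fn, amount in savings.items():
    print(f"{fn}: ${amount:,.0f} saved per year")
# prints "Outbound Sales Team: $900,000 saved per year"
# prints "L1/L2 Support: $400,000 saved per year"
```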

What Are the Real Execution Risks, and How Do You Mitigate Them?

How Do You Transition from Traditional to AI-Native?

How Do You Hire for the 10-Person Model?

Conclusion: The Founder's Choice

Related Reading

- AI Tools Running My Companies - Real AI tools generating ROI today

- AI Strategy for CEOs - Strategic framework for AI adoption

- The Venture Studio Model - How we build capital-efficient companies

- B2B SaaS Scaling Playbook - Scale from $1M to $10M ARR

Build the New Way

- See our portfolio: Explore companies built with this model at Scalable Ventures

- Access frameworks: Download AI implementation and scaling tools

- Strategic advisory: Learn about my advisory services for AI-native founders

- Get in touch: Reach out to discuss building together